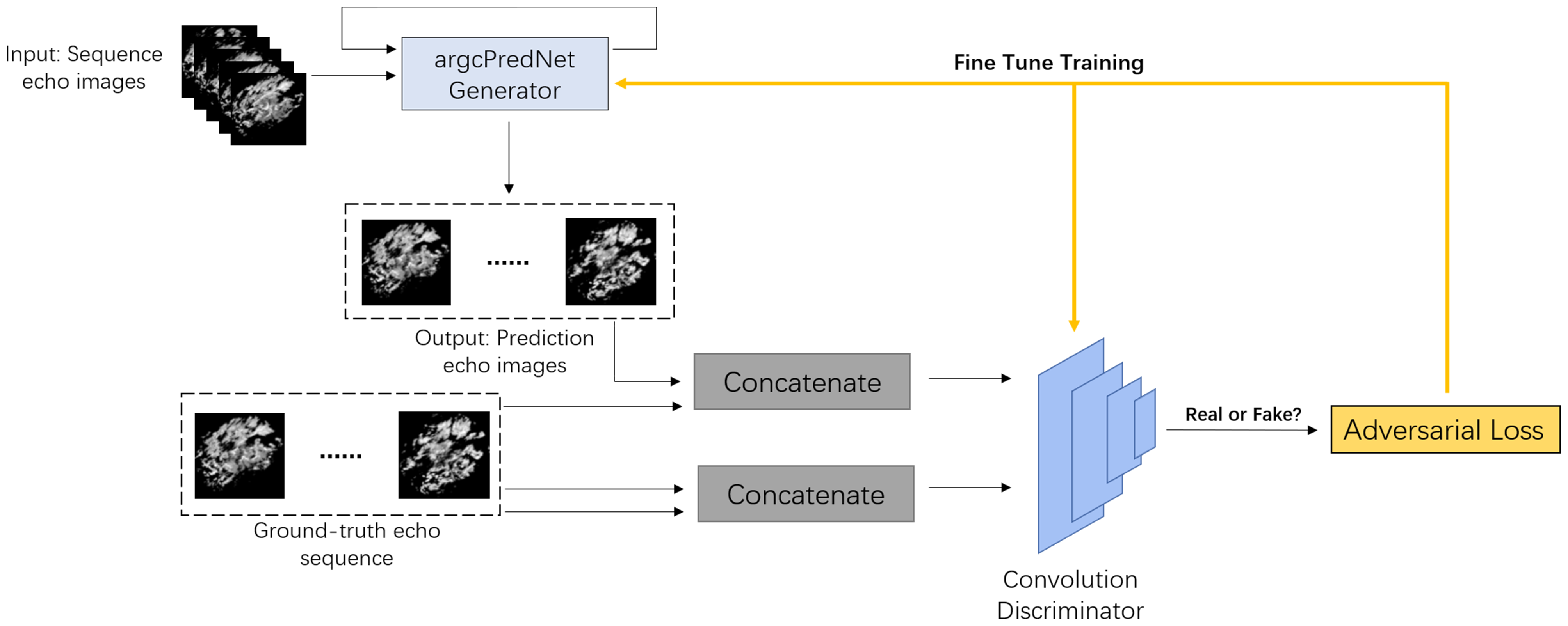

Object navigation is the task of having an agent navigate to a target object.

Prevailing works attempt to extend navigation ability to new environments, and they achieve reasonable performance on seen object categories, that is, categories observed in the training environments. However, this setting is limited in real-world scenarios, where navigating to unseen object categories is generally unavoidable. In this paper, we focus on the problem of navigating to unseen objects in new environments based only on limited training knowledge.

As in the common ObjectNav task, our agent receives only the egocentric observation and the target object category as input and requires no extra inputs. Our solution is to let the agent "imagine" the unseen object by synthesizing features of the target object. We propose a generative meta-adversarial network (GMAN), composed mainly of a feature generator and an environmental meta discriminator, which generates features for unseen objects and new environments in two steps. The former generates the initial features of the unseen object from the semantic embedding of the object category. The latter enables the generator to further learn the background characteristics of the new environment, progressively adapting the generated features to approximate the real features of the target object. The adapted features serve as a more specific representation of the target to guide the agent. Moreover, to update the generator quickly from only a few observations, the entire adversarial framework is learned in a gradient-based meta-learning manner. Experimental results on the AI2THOR and RoboTHOR simulators demonstrate the effectiveness of the proposed method in navigating to unseen object categories.
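The two-step idea in the abstract (generate initial features from a category's semantic embedding, then adapt them with a few observations from the new environment) can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the linear generator, the function names, the toy numbers, and above all the adaptation loss. The abstract describes an adversarial signal from an environmental meta discriminator trained with gradient-based meta-learning; this sketch swaps that for a plain L2 feature-matching loss and shows only the few-step adaptation, not the meta-training.

```python
# Illustrative sketch only: names, shapes, and the loss are assumptions,
# not the GMAN implementation.

def generate_initial(W, b, embedding):
    """Step 1 (assumed linear generator): semantic embedding -> initial feature."""
    return [sum(w * e for w, e in zip(row, embedding)) + b_i
            for row, b_i in zip(W, b)]

def mean_feature(observations):
    """Average the few feature vectors observed in the new environment."""
    n = len(observations)
    return [sum(col) / n for col in zip(*observations)]

def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def adapt(feature, observations, steps=3, lr=0.5):
    """Step 2 stand-in: a few gradient steps on 0.5 * ||f - mean(obs)||^2.

    This L2 feature-matching loss replaces the adversarial discriminator
    signal described in the abstract; it is a simplification.
    """
    target = mean_feature(observations)
    f = list(feature)
    for _ in range(steps):
        # Gradient of the loss with respect to f is (f - target).
        f = [x - lr * (x - t) for x, t in zip(f, target)]
    return f

# Hypothetical toy values: a 2-d embedding mapped to a 3-d feature.
W = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
b = [0.0, 0.0, 0.0]
embedding = [1.0, -1.0]
observations = [[2.0, 0.0, 1.0], [4.0, 2.0, 1.0]]

initial = generate_initial(W, b, embedding)
adapted = adapt(initial, observations)
target = mean_feature(observations)
```

With these toy values, three steps move the generated feature most of the way toward the mean of the observed features, which mirrors the "progressively adapting" behavior the abstract describes, though by a much simpler mechanism.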
